Introducing Span's AI Effectiveness suite, powered by agent traces

Introducing Span’s AI Effectiveness suite: A new foundation for better AI outcomes

Span Team

Over the last few months, we’ve been studying AI’s impact on engineering teams. Our work made one thing clear: a team that uses AI is not necessarily using it effectively.

Teams have pushed hard on AI adoption. What they still lack is a practical way to understand how developers are working with AI, where friction shows up, and how to improve day-to-day workflows across the org.

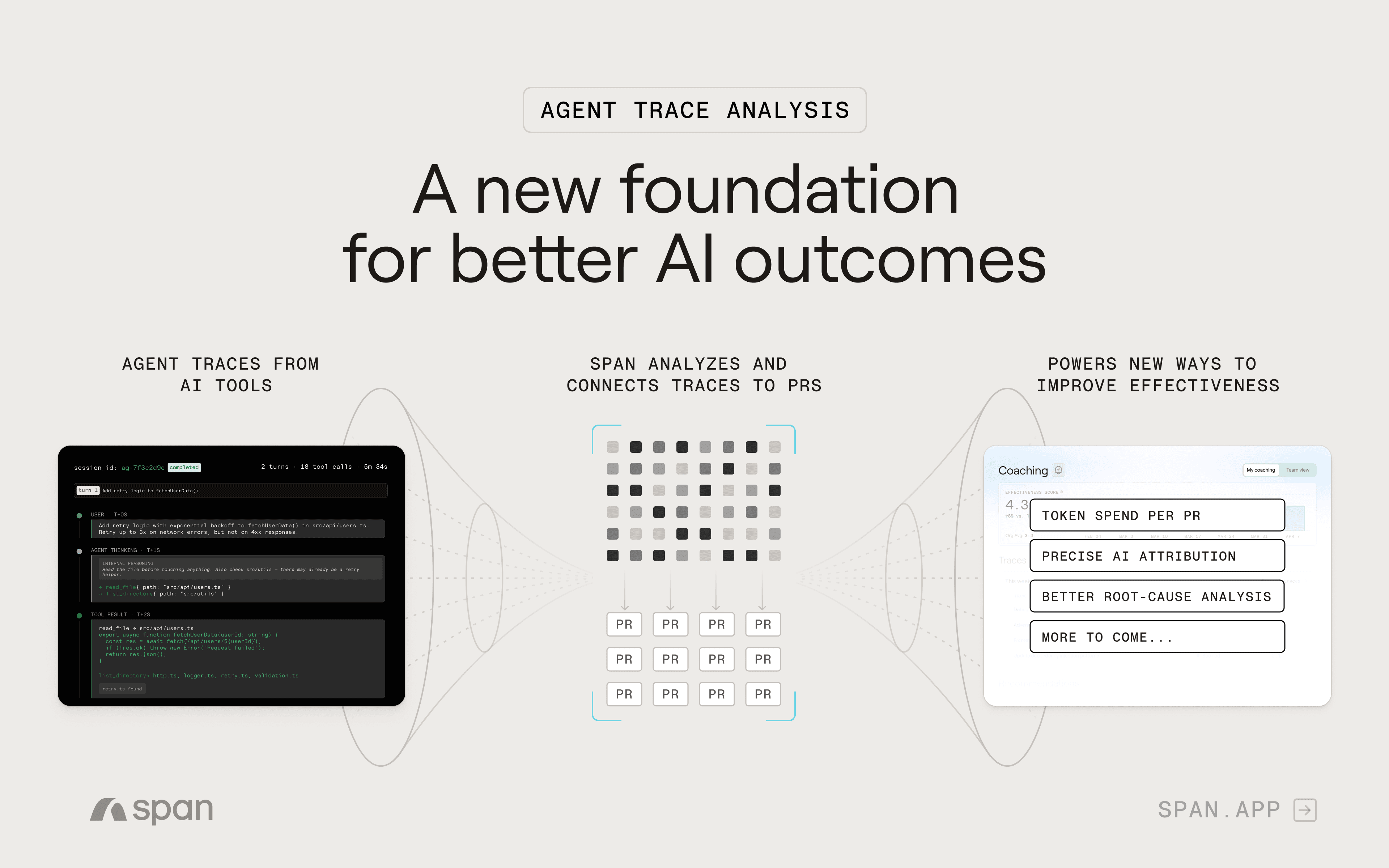

Today, we’re introducing Span’s AI Effectiveness product suite, built on a fundamentally new signal: agent traces.

A new way to understand AI effectiveness

Agent traces create a new foundation for understanding how AI-assisted work unfolds. They capture the underlying interaction history inside tools like Cursor and Claude Code: prompts, tool calls, file edits, token usage, reasoning steps, and more.

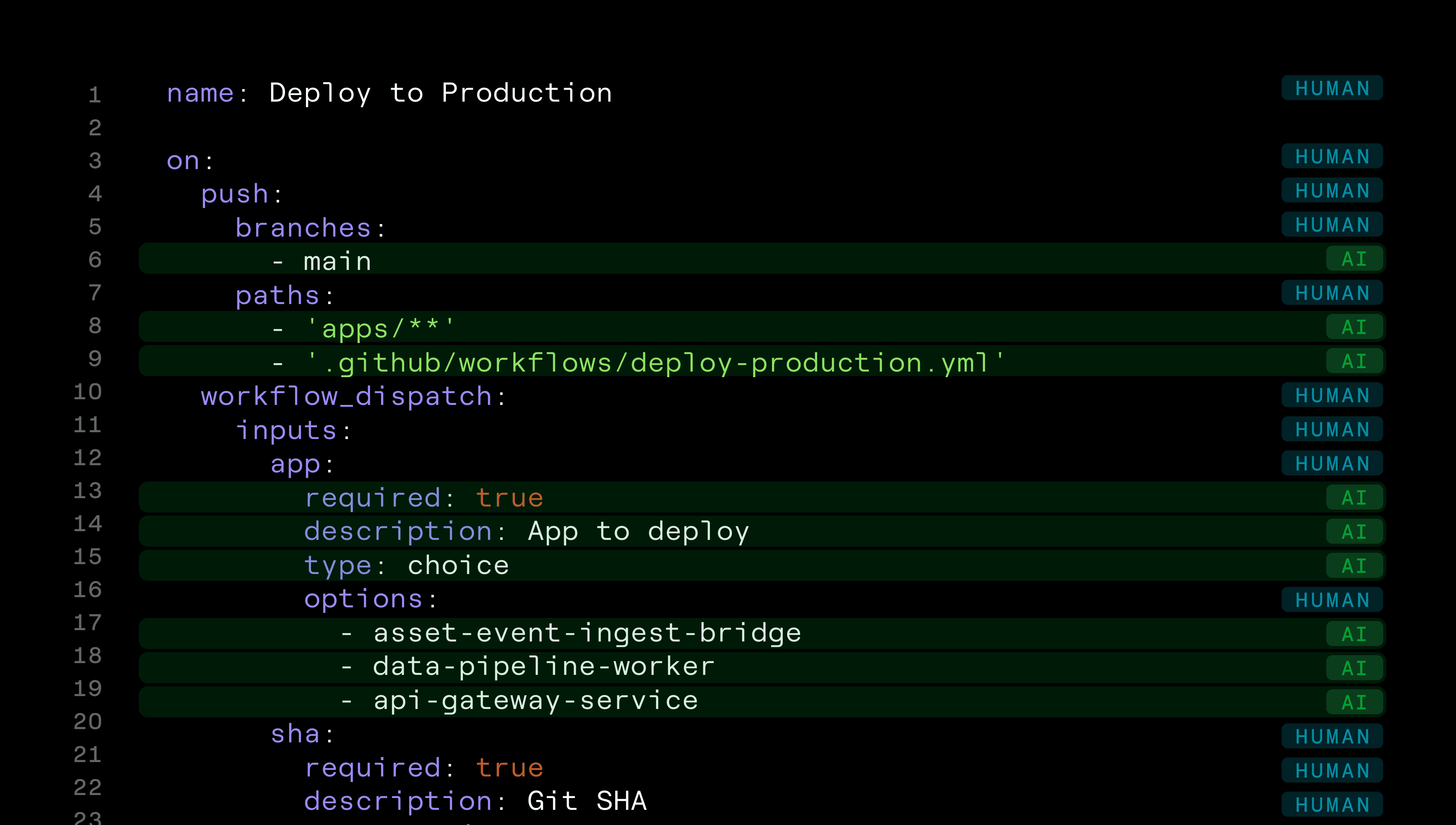

Span captures these traces, redacts sensitive data, and correlates them to pull requests using algorithms that identify which file edits and trace segments belong to each PR. This connects session-level AI activity to the code that ships.
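To make the correlation step concrete, here is a minimal sketch of one way to link trace segments to pull requests by file overlap. It is not Span's actual algorithm, which this post does not describe. The `TraceSegment` and `PullRequest` types, the `correlate` function, and the `min_overlap` threshold are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class TraceSegment:
    """Hypothetical slice of an agent session and the files it edited."""
    session_id: str
    edited_files: set[str]


@dataclass
class PullRequest:
    """Hypothetical PR record with the paths it changed."""
    number: int
    changed_files: set[str]


def correlate(segments, prs, min_overlap=0.5):
    """Assign each segment to the PR that contains the largest share of its edited files.

    A segment is left unassigned when no PR covers at least
    `min_overlap` of the files the segment touched (an assumed threshold).
    """
    matches = {pr.number: [] for pr in prs}
    for seg in segments:
        if not seg.edited_files:
            continue  # nothing to match on
        best_pr, best_score = None, 0.0
        for pr in prs:
            shared = seg.edited_files & pr.changed_files
            score = len(shared) / len(seg.edited_files)
            if score > best_score:
                best_pr, best_score = pr, score
        if best_pr is not None and best_score >= min_overlap:
            matches[best_pr.number].append(seg)
    return matches


segments = [
    TraceSegment("s1", {"api/users.py", "api/auth.py"}),
    TraceSegment("s2", {"web/index.ts"}),
    TraceSegment("s3", {"scratch/notes.md"}),  # never shipped in any PR
]
prs = [
    PullRequest(101, {"api/users.py", "api/auth.py", "README.md"}),
    PullRequest(102, {"web/index.ts"}),
]
result = correlate(segments, prs)
print({number: [s.session_id for s in segs] for number, segs in result.items()})
```

A production system would likely also weigh timestamps, branches, and the content of each edit; file-path overlap alone is only the simplest signal to illustrate.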

Span also applies agent evals to those traces. It brings a familiar DevEx idea into AI workflows: asking structured questions of agent interactions and synthesizing feedback across thousands of sessions. That gives teams a more direct way to understand where AI workflows are breaking down, how patterns vary across developers and teams, and where to focus next.

A source of truth for developer-agent interactions

Agent traces give teams a single place to understand how AI-assisted work is unfolding.

Inside Span, those traces are pulled into one system of record and connected to pull requests, creating a new layer of observability for AI-assisted development. That gives teams a clearer way to trace AI-assisted work to shipped code and drill down to the underlying session when deeper investigation is needed.

That observability enables a few immediate applications:

Token cost per PR

See the true cost of AI-assisted work at the pull request level, making it easier for leaders to understand where spend is concentrated and how it rolls up.
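As a rough illustration of how such a roll-up could work, the sketch below sums token usage per pull request and converts it to dollars. The record layout, the `cost_per_pr` function, and the prices in `PRICE_PER_MILLION` are assumptions made for this example, not Span's data model or any vendor's actual pricing.

```python
from collections import defaultdict

# Hypothetical prices in USD per one million tokens; real prices vary by model and vendor.
PRICE_PER_MILLION = {"input": 3.00, "output": 15.00}


def cost_per_pr(usage_records):
    """Sum the estimated token cost of every usage record attributed to each PR."""
    totals = defaultdict(float)
    for rec in usage_records:
        cost = (
            rec["input_tokens"] * PRICE_PER_MILLION["input"]
            + rec["output_tokens"] * PRICE_PER_MILLION["output"]
        ) / 1_000_000
        totals[rec["pr"]] += cost
    return dict(totals)


# Each record is one trace segment already linked to a PR (illustrative data).
records = [
    {"pr": 101, "input_tokens": 1_000_000, "output_tokens": 100_000},
    {"pr": 101, "input_tokens": 0, "output_tokens": 200_000},
    {"pr": 102, "input_tokens": 500_000, "output_tokens": 0},
]
costs = cost_per_pr(records)
for pr_number, usd in sorted(costs.items()):
    print(f"PR #{pr_number}: ${usd:.2f}")
```

Once costs are keyed by PR, rolling them up by team or repository is a matter of grouping on whatever metadata the PR already carries.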

Better root-cause analysis

If something goes wrong, teams can investigate the traces behind the resulting code changes and understand how the interaction unfolded.

Precise AI attribution

Interaction history gives Span a more direct way to understand the lineage of code in a PR, and which specific lines were generated by AI, strengthening downstream analysis across the Span platform.
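One simple way to picture line-level attribution is to compare the lines a PR adds with the lines an agent wrote during the linked sessions. The sketch below does this with exact text matching after trimming whitespace, which is a deliberate simplification; the function name `attribute_lines` and both input shapes are hypothetical and do not reflect how Span implements attribution.

```python
def attribute_lines(pr_added, agent_written):
    """Flag each non-blank line added in a PR as agent-written or not.

    pr_added:      dict of path -> list of lines added by the PR
    agent_written: dict of path -> list of lines the agent wrote to that path
    Returns (flags, share), where flags maps path -> [(line, is_ai), ...]
    and share is the fraction of added lines that match agent output.
    """
    flags = {}
    ai_count = 0
    total = 0
    for path, lines in pr_added.items():
        written = {ln.strip() for ln in agent_written.get(path, [])}
        file_flags = []
        for ln in lines:
            text = ln.strip()
            if not text:
                continue  # blank lines carry no attribution signal
            is_ai = text in written
            file_flags.append((text, is_ai))
            ai_count += int(is_ai)
            total += 1
        flags[path] = file_flags
    share = ai_count / total if total else 0.0
    return flags, share


pr_added = {"app.py": ["def f():", "    return 1", "", "print(f())"]}
agent_written = {"app.py": ["def f():", "    return 1"]}
flags, share = attribute_lines(pr_added, agent_written)
print(f"{share:.0%} of added lines match agent output")
```

Exact matching misses lines a developer lightly edited after the agent wrote them, so a real system would need fuzzier comparison or edit-level lineage from the trace itself.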

What comes next

Agent trace analysis unlocks more than visibility: it opens up a set of product opportunities that can help teams improve AI effectiveness in real-world environments.

Over the next few weeks, we’ll introduce the first applications built on this foundation, each aimed at a problem the market still hasn’t solved: how to make AI effectiveness measurable, how to turn that understanding into continuous improvement, and how to improve the environments agents depend on to work well.

Agent traces give Span a more direct way to understand AI contribution and a stronger foundation for helping teams move beyond adoption and build real proficiency.

Next post in this series: The Effectiveness Scorecard

Everything you need to unlock engineering excellence
