Introducing Span's AI Effectiveness suite, powered by agent traces


Quality in the Age of AI


AI Doesn't Write Buggier Code. It Writes Bigger PRs.


Span Research Team


When you pull just the raw numbers, AI-assisted PRs look worse than mostly human-written ones. The narrative seemingly writes itself: AI generates sloppier code, and the bugs prove it.

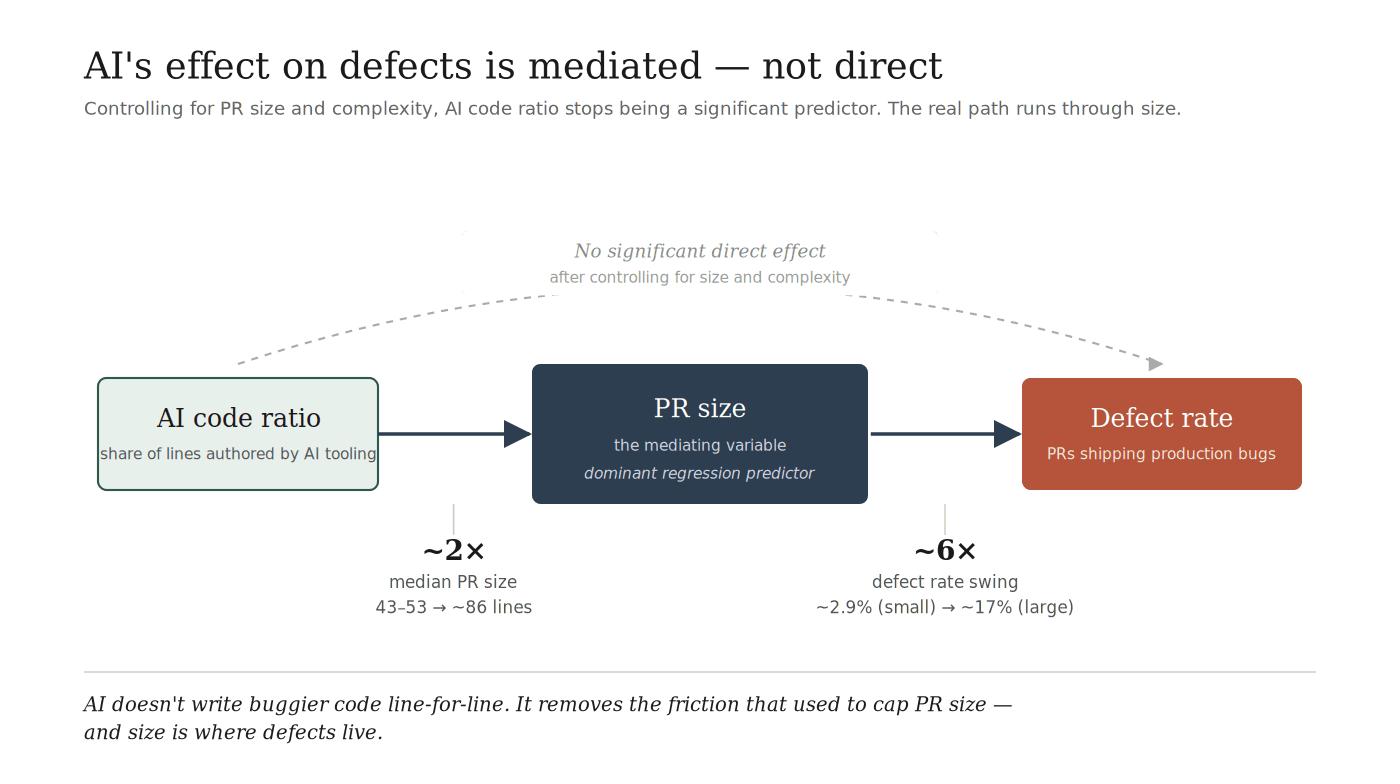

But a different story appears when you run a multivariate regression. Control for PR size and complexity, and AI code ratio stops being statistically significant. What does stand out, for teams across the board, is how strongly PR size predicts code quality.

Span's Analysis

We ran a regression across 248,099 pull requests from a selected sample of engineering organizations, with defect attribution drawn from merged PRs linked to production incidents. The model included AI code ratio, PR size, complexity score, cycle time, and reviewer count. The outcome was whether a PR introduced a production defect.

Two variables survived with significant p-values: PR size and complexity. AI code ratio did not.
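To make the model setup concrete, here is a minimal sketch of a logistic regression of a binary "introduced a production defect" outcome on the five standardized PR variables, fit by plain gradient descent in pure Python. The post does not say which estimator or software was used, so treat logistic regression as an assumption, and the column names (`ai_code_ratio`, `pr_lines`, `complexity`, `cycle_time_hours`, `reviewers`, `introduced_defect`) as hypothetical. The sketch returns point estimates only; the p-values the analysis relies on need standard errors, which a statistics library would supply.

```python
import math
from typing import Dict, List, Sequence

# Hypothetical column names for the five variables the post lists.
FEATURES = ["ai_code_ratio", "pr_lines", "complexity", "cycle_time_hours", "reviewers"]


def standardize(rows: Sequence[Dict[str, float]], features: Sequence[str]) -> List[List[float]]:
    """Z-score each feature so coefficients are comparable across very different scales."""
    columns = []
    for name in features:
        values = [float(row[name]) for row in rows]
        mean = sum(values) / len(values)
        std = math.sqrt(sum((v - mean) ** 2 for v in values) / len(values)) or 1.0
        columns.append([(v - mean) / std for v in values])
    return [list(vec) for vec in zip(*columns)]  # one feature vector per PR


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_logistic(X: List[List[float]], y: List[int], lr: float = 0.1, epochs: int = 2000) -> List[float]:
    """Batch gradient descent on mean log-loss; returns [intercept, coef_1, ..., coef_k]."""
    n, k = len(X), len(X[0])
    w = [0.0] * (k + 1)
    for _ in range(epochs):
        grad = [0.0] * (k + 1)
        for xi, yi in zip(X, y):
            err = sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], xi))) - yi
            grad[0] += err
            for j in range(k):
                grad[j + 1] += err * xi[j]
        w = [wj - lr * g / n for wj, g in zip(w, grad)]
    return w


def defect_model(prs: Sequence[Dict[str, float]]) -> Dict[str, float]:
    """Fit P(introduced_defect) on standardized features; keys are feature names."""
    X = standardize(prs, FEATURES)
    y = [int(pr["introduced_defect"]) for pr in prs]
    return dict(zip(["intercept"] + FEATURES, fit_logistic(X, y)))
```

Standardizing first means a coefficient reads as "change in log-odds per standard deviation," which is what lets PR size and AI code ratio be compared on one scale.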

PR Size Is the Dominant Predictor

The effect is not subtle. Defect rate by PR size:

| PR size | Defect rate |
|---|---|
| Small (tens of lines) | ~2.9% |
| Large (1,000+ lines) | ~17% |

A roughly 6x swing from one end of the distribution to the other. And this pattern holds across every organization in the dataset. Some orgs handle large PRs better than others — likely through stronger testing infrastructure or review discipline — but within any given org, larger PRs are reliably buggier than smaller ones. The slope varies. The direction doesn't.
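A defect-rate-by-size breakdown like the table above can be computed from per-PR records in a few lines. This is a sketch under stated assumptions: each PR is a hypothetical `(lines_changed, introduced_defect)` pair, and the bucket edges are illustrative, since the post reports only the "tens of lines" and "1,000+ lines" ends of the distribution. The last lines show the arithmetic behind "roughly 6x" using the post's reported rates.

```python
from typing import Dict, Iterable, Tuple

# Hypothetical bucket edges (lower bound, label), sorted ascending; not Span's cut points.
BUCKETS = [(0, "small (<100)"), (100, "medium (100-999)"), (1000, "large (1,000+)")]


def bucket_for(lines: int) -> str:
    """Return the label of the highest bucket whose lower bound the PR reaches."""
    label = BUCKETS[0][1]
    for lower, name in BUCKETS:
        if lines >= lower:
            label = name
    return label


def defect_rate_by_size(prs: Iterable[Tuple[int, bool]]) -> Dict[str, float]:
    """Fraction of PRs in each size bucket that introduced a production defect."""
    totals: Dict[str, int] = {}
    defects: Dict[str, int] = {}
    for lines, introduced_defect in prs:
        b = bucket_for(lines)
        totals[b] = totals.get(b, 0) + 1
        defects[b] = defects.get(b, 0) + int(introduced_defect)
    return {b: defects[b] / totals[b] for b in totals}


# The headline swing, from the reported ~17% and ~2.9% rates.
swing = 0.17 / 0.029
print(f"large vs. small defect rate: {swing:.1f}x")  # 5.9x
```

Running the same function per organization is one way to check the "slope varies, direction doesn't" claim on your own data.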

Complexity tracks alongside PR size as the other significant regression variable. The two often travel together, but they're distinct: a 50-line change to a state machine is a different animal than a 500-line config update. Both matter. Neither is AI code ratio.

What AI Actually Does

This is where the story turns.

AI-assisted PRs (AI code ratio above 5%) are materially larger than mostly human-written ones (0-5%):

| PR type | Median PR lines |
|---|---|
| Mostly human-written PRs | 43-53 |
| AI-assisted PRs | ~86 |

Roughly double. And larger PRs are buggier. So the AI → defects relationship is real, but the arrow runs AI → PR size → defects, not AI → defects directly. AI's effect on quality is mediated through the size of the work it produces. This holds even when defect rates are normalized per line of code.
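The cohort split and the per-line normalization can be sketched the same way. Assumptions, all hypothetical: each PR record carries AI-attributed lines (standing in for the IDE telemetry attribution), total lines changed, and a defect flag; AI code ratio is AI-attributed lines divided by total lines; and the 5% cutoff follows the post's definition of "mostly human-written" versus "AI-assisted."

```python
from dataclasses import dataclass
from statistics import median
from typing import Dict, List


@dataclass
class PR:
    ai_lines: int            # lines attributed to AI tooling (hypothetical telemetry field)
    total_lines: int         # lines changed in the PR
    introduced_defect: bool  # linked to a production incident


def cohort(pr: PR, threshold: float = 0.05) -> str:
    """Mostly human-written at or below a 5% AI code ratio, AI-assisted above it."""
    ratio = pr.ai_lines / pr.total_lines if pr.total_lines else 0.0
    return "ai_assisted" if ratio > threshold else "mostly_human"


def cohort_summary(prs: List[PR]) -> Dict[str, Dict[str, float]]:
    """Median size, per-PR defect rate, and defects per 1,000 lines for each cohort."""
    groups: Dict[str, List[PR]] = {}
    for pr in prs:
        groups.setdefault(cohort(pr), []).append(pr)
    summary = {}
    for name, members in groups.items():
        lines = sum(p.total_lines for p in members)
        defects = sum(p.introduced_defect for p in members)
        summary[name] = {
            "median_lines": median(p.total_lines for p in members),
            "defect_rate": defects / len(members),
            "defects_per_kloc": 1000 * defects / lines if lines else 0.0,
        }
    return summary
```

Comparing `defect_rate` with `defects_per_kloc` across the two cohorts is what separates "AI PRs are bigger" from "AI lines are buggier."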

This reframes the entire AI quality debate. The question "does AI write buggier code?" presumes a direct effect that the data doesn't support. The better question is: "does AI change the shape of the work in ways that make defects more likely?" And the answer is yes — through size.

Why This Finding Makes Sense

AI doesn't produce worse code line-by-line. It removes a friction that used to cap PR size.

Writing 200 lines of code used to cost something. The engineer had to type it, shape it, think through it. When the marginal cost of producing code drops, the marginal PR grows. Engineers ship 200 lines where they would have shipped 50.

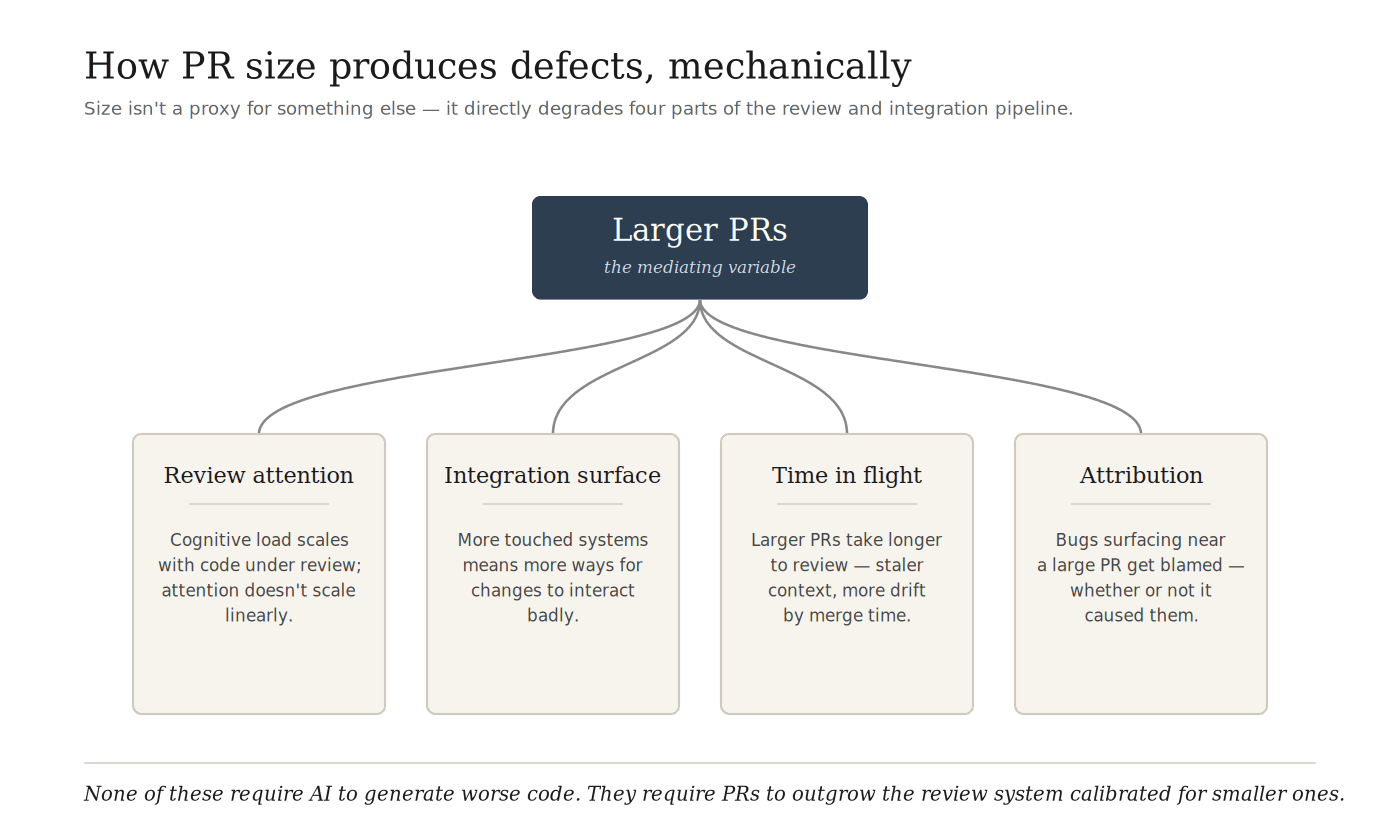

Larger PRs then introduce a cascade of secondary effects:

Reviewers skim more and catch less. Cognitive load scales with the amount of code under review, and attention does not scale linearly.

Integration surface area grows. More touched systems means more ways for changes to interact badly.

Time-in-flight extends. Larger PRs take longer to review, which means staler context at merge and more drift between what the author intended and what the system now looks like.

Attribution blurs. When a bug surfaces near a large PR, the PR tends to catch the blame whether or not it caused the defect.

None of this requires AI to generate worse code. The mechanism is structural: AI changes what a "normal" PR looks like, and review practices calibrated for the old normal catch less in the new one.

Connecting to the Wider Cohort

This pattern also sits underneath what we found in the cohort analysis (see: Autonomous Teams Ship Cleaner AI Code). AI Champions and AI Risk Zone teams adopt AI at similar rates, but Champions maintain tighter PR-size discipline while Risk Zone teams let size drift. The gap is small at the cohort median (86 vs. 91 lines) but compounds with cross-team review and cycle time into a measurable defect-rate difference.

The cohort piece described what disciplined teams do. This one explains why that discipline matters in the first place. PR size isn't one factor among many — it's the mechanism through which AI touches defect rate at all, for any team, in any cohort.

3 Things Your Org Can Do About PR Size

Set and enforce a PR size ceiling

The 1,000-line PRs driving the 17% defect rate shouldn't exist. Most teams tolerate them because splitting feels like overhead. The data says the overhead is worth it. Pick a number — 400 lines, 500 lines, whatever fits your domain — and treat it as a hard limit, not a soft guideline.
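One way to make the ceiling a hard limit is a CI step that fails when a branch's diff exceeds it. The sketch below shells out to `git diff --numstat`, which prints added and deleted line counts per file (binary files show `-` for both). The 400-line limit, the `origin/main` base ref, and counting added plus deleted lines are assumptions to tune for your repo.

```python
import subprocess
import sys

MAX_LINES = 400           # hypothetical ceiling; pick what fits your domain
BASE_REF = "origin/main"  # hypothetical base branch for the PR


def git_numstat(base_ref: str = BASE_REF) -> str:
    """Per-file added/deleted counts for this branch relative to its merge base with base_ref."""
    return subprocess.run(
        ["git", "diff", "--numstat", f"{base_ref}...HEAD"],
        capture_output=True, text=True, check=True,
    ).stdout


def lines_changed(numstat: str) -> int:
    """Sum added + deleted lines from `git diff --numstat` output, skipping binary files."""
    total = 0
    for row in numstat.splitlines():
        parts = row.split("\t")
        if len(parts) < 3 or not parts[0].isdigit() or not parts[1].isdigit():
            continue  # binary files are reported as "-\t-\tpath"
        total += int(parts[0]) + int(parts[1])
    return total


def check(numstat: str, limit: int = MAX_LINES) -> int:
    """Return a process exit code: 0 if the PR is within the ceiling, 1 otherwise."""
    size = lines_changed(numstat)
    if size > limit:
        print(f"PR changes {size} lines; the limit is {limit}. Please split it.", file=sys.stderr)
        return 1
    print(f"PR size OK: {size} lines (limit {limit}).")
    return 0
```

In CI, save this as a module (say, a hypothetical `pr_size_check.py`) and run `python -c "import sys, pr_size_check as c; sys.exit(c.check(c.git_numstat()))"` as a required step; the non-zero exit fails the job. The three-dot range diffs against the merge base, so commits that landed on the base branch after the PR branched are not counted against it.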

Instrument PR size before instrumenting AI usage

Teams rolling out AI tooling often measure adoption: what percent of engineers are using it, what percent of lines are attributed to AI. These are interesting but not actionable. Median PR size, measured before and after AI rollout, tells you whether your workflows are absorbing the change or compounding it. If median PR size doubles, your quality baseline will shift whether or not you notice.
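Measuring median PR size before and after a rollout needs only merge dates and line counts. A minimal sketch, assuming each merged PR is a hypothetical `(merged_on, lines_changed)` pair and the org has a single rollout date:

```python
from datetime import date
from statistics import median
from typing import Iterable, Optional, Tuple


def median_size_shift(
    prs: Iterable[Tuple[date, int]], rollout: date
) -> Tuple[Optional[float], Optional[float]]:
    """Median lines changed for PRs merged before vs. on/after the rollout date."""
    records = list(prs)  # materialize so the input can be scanned twice
    before = [lines for merged, lines in records if merged < rollout]
    after = [lines for merged, lines in records if merged >= rollout]
    return (median(before) if before else None, median(after) if after else None)
```

An after-to-before ratio near 2 is the "median PR size doubles" case described above; tracking the same number per team shows where the shift is concentrated.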

Treat AI-assisted PRs as a signal to review harder, not faster

The temptation with AI-generated code is to trust it because it reads clean. Well-structured, plausible, syntactically correct. The data says AI-assisted PRs are larger, and larger PRs are where defects hide. The review heuristic that used to work for a 50-line human PR is not the right heuristic for an 86-line AI-assisted one.

A Note On Scope

The regression identifies correlations after controlling for the other variables, not causation. PR size could be a proxy for variables we didn't include in the model. We think the mediation story is the most parsimonious explanation, and the directional stability across organizations supports it, but a single org's data could look different.

"AI code ratio" in our analysis is measured by IDE and editor telemetry. It captures lines attributed to AI tooling at the moment of authorship, not the quality of how that code was prompted, reviewed, or edited before commit. A PR marked 40% AI-generated could have been substantially refined by the engineer; one marked 5% could have been AI-suggested end-to-end and mostly deleted. The telemetry gives us a useful signal, not a clean experimental variable.

The Bottom Line

The AI quality debate has been stuck on the wrong variable. AI-assisted code isn't meaningfully buggier than human-written code on a like-for-like basis. What AI changes is the shape of the work, which determines quality. Teams that keep PRs small capture the speed benefit without paying the defect tax. Teams that let PR size drift pay twice: once in review load, once in production.

If you're measuring AI's impact on your engineering org by looking at defect rates alone, you're measuring a derivative of the thing that actually matters. Watch the lines.

Analysis based on 248,099 pull requests across a selected sample of engineering organizations, with defect attribution drawn from merged PRs linked to production incidents. Multivariate regression controlled for PR size, complexity score, cycle time, reviewer count, and AI code ratio. Directional findings validated across organizations in the sample.

Everything you need to unlock engineering excellence
