Introducing Span's AI Effectiveness suite, powered by agent traces


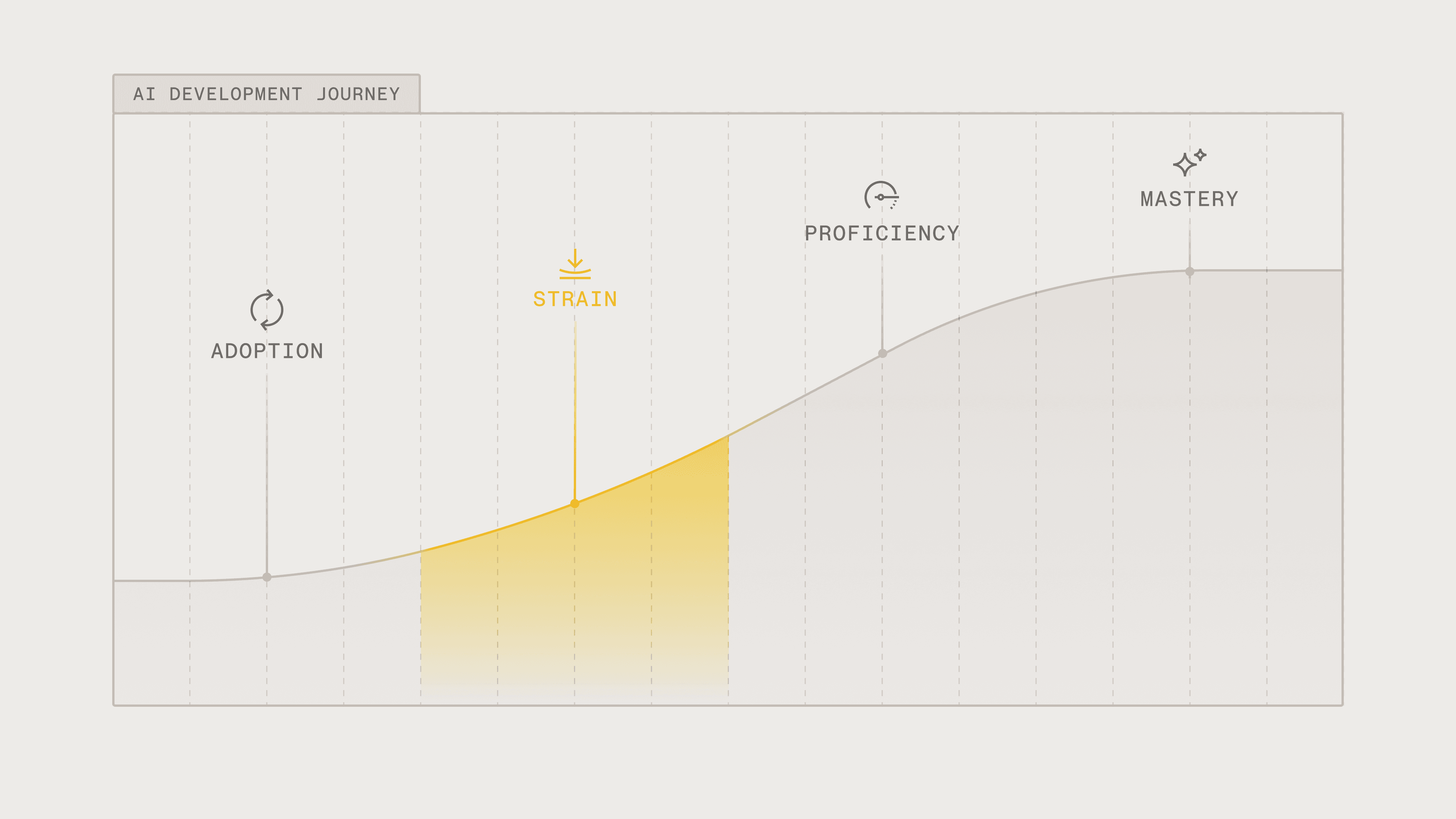

From Strain to Proficiency: How Engineering Orgs Move Past the AI Adoption Valley


Span Team


Most engineering orgs have adopted AI. But now, they're stuck in a phase where AI output is scaling faster than the systems designed to verify and review it without sacrificing quality.

We call this the “Strain” stage of the Span AI Maturity Framework. At this stage, teams are watching as their throughput numbers accelerate, but the outcomes they assumed would come with AI adoption haven’t followed.

Span’s AI Maturity Framework: The 4 Steps of AI-Driven Development

First, it’s important to know that our AI Maturity Framework covers four distinct stages:

Stage 1: AI Adoption

Organizations have just adopted their first set of tools and AI usage varies across the organization (often widely). Overall, AI adoption rates climb to around 20 to 60%. But adoption rate alone doesn't tell the full story — there's a meaningful difference between teams using AI for basic autocomplete and code suggestions versus teams that are delegating substantial, multi-step work to AI agents.

Most organizations at this stage skew heavily toward the former, even if their dashboards report high adoption numbers. Engineers may have adopted AI coding tools, but the focus is mostly on how many engineers have access rather than on what they’re using the tools for.

Stage 2: Strain

Strain is the phase where AI adoption is high (60–85%) but lead times are slowing, quality is declining, and costs are rising because AI-generated code is scaling faster than the processes designed to verify it. Organizations are producing more code but delivering less value, and they can't pinpoint why.

What makes Strain particularly hard to diagnose is that the type of AI usage has often shifted without leadership noticing. Early adoption tends to be lightweight — autocomplete, inline suggestions, small code completions. But as teams grow comfortable, engineers begin treating AI tools less like assistants and more like coworkers, delegating substantial tasks like multi-file refactors, feature scaffolding, or test generation.

This is a natural progression, but it changes the risk profile dramatically. The guardrails that worked when AI was suggesting single lines of code don't hold when it's producing entire features — and most organizations in Strain haven't evolved those guardrail designs to match.

Stage 3: Proficiency

Teams that have implemented the right guardrails move beyond organizational Strain. At this stage, thanks to guardrails put in place early, shipping velocity typically increases along with output quality. Because AI tools are in use across most of the organization, costs are high. However, these costs are understood and, in most cases, intentional.

The defining characteristic of the Proficiency stage is that engineering leadership not only has visibility into what AI is actually doing to delivery, quality, and cost, but can also make decisions accordingly.

Stage 4: Mastery

Very few organizations have reached the Mastery stage. Here, the entire engineering organization ships at both high velocity and high quality, with cost-efficient AI usage across the full development life cycle. The organization has essentially learned how to steer its engineering teams through an AI-driven development cycle.

Most Orgs Are Stuck in the Strain Phase

The challenge with Strain is that it often hides behind seemingly encouraging surface metrics. Teams are initially excited as they watch the number of merged PRs and code volume climb rapidly, with many of their AI adoption dashboards showing green across the board. From a distance, it looks like the investment is paying off.

But the data tells a different story.

Greptile's State of AI Coding report found that median PR size increased 33% over 2025 and lines of code per developer nearly doubled — from 4,450 to 7,839. But despite producing dramatically more code, engineering teams everywhere were actually seeing productivity decline. CircleCI's 2026 State of Software Delivery report, which analyzed over 28 million workflows, found that despite a 59% increase in engineering throughput, most organizations are leaving the majority of those gains on the table because the systems downstream of code generation haven't kept up.

The gap between perception and reality is also significant. In a randomized controlled trial by METR, experienced open-source developers using AI tools took 19% longer to complete tasks while believing they were 20% faster.

The 2025 DORA Report explains why this pattern plays out at an industry level: AI doesn't fix organizations. Rather, it amplifies what's already there, meaning that it magnifies the strengths of high-performing teams and the weaknesses of struggling ones. The report found no correlation between adoption alone and organizational performance.

The teams that benefit from AI are the ones with strong engineering foundations — robust testing, mature version control, fast feedback loops. The ones without those foundations (or those that fail to maintain them) see speed turn into instability.

What Does Strain Look Like for Engineering Orgs?

Symptoms of Strain tend to show up together:

Review queues are growing faster than PR volume

Code output has surged, but reviewers haven't doubled. When PR sizes increase 33% and per-developer output nearly doubles, review capacity becomes the binding constraint. PRs sit for days, and the bottleneck shifts from writing to verifying.
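The arithmetic behind that constraint can be sketched in a few lines. This is a rough illustration, not a measurement: it uses the lines-of-code figures cited earlier (4,450 to 7,839 per developer) and assumes, hypothetically, that review effort scales with lines of code and that the number of reviewers stays flat.

```python
def review_load_multiplier(output_multiplier, reviewer_multiplier=1.0):
    """Relative change in review load per reviewer.

    Assumes review effort is proportional to lines of code, which is a
    simplification made only for this illustration.
    """
    if reviewer_multiplier <= 0:
        raise ValueError("reviewer_multiplier must be positive")
    return output_multiplier / reviewer_multiplier


# Per-developer output growth from the figures cited earlier in this article.
output_growth = 7839 / 4450

# With a flat reviewer pool, each reviewer carries the full increase.
flat_pool = review_load_multiplier(output_growth)

# Hypothetical scenario: the reviewer pool grows by 20%.
larger_pool = review_load_multiplier(output_growth, reviewer_multiplier=1.2)
```

Under these assumptions, even a 20% larger reviewer pool still leaves each reviewer with noticeably more code to verify than before.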

AI-generated code is creating more issues, not fewer

CodeRabbit's analysis of 470 open-source PRs found that AI-co-authored code contains roughly 1.7x more issues per PR than human-written code. Critical and major defects, including business logic errors, misconfigurations, and unsafe control flow, are 1.4–1.7x higher.

Senior engineers are reviewing more than shipping code

When reviewers don't fully trust AI-generated output, they over-scrutinize. This often leads to a trust gap where engineers spend more time reviewing code than they do building features. The Stack Overflow 2025 Developer Survey found that only 29 to 46% of developers trust AI-generated output, even as more teams continue to adopt AI. Consequently, the most experienced engineers are spending more time verifying code than designing systems.

The review burden compounds when AI usage has quietly shifted from suggestions to delegation. A PR where AI autocompletes a few lines looks and reviews like human code. A PR where an agent scaffolded an entire feature requires a fundamentally different kind of review, and most teams haven't distinguished between the two in their process.

Teams feel fast, but they’re not shipping features faster

This is the defining tension of Strain. Individual engineers feel more productive, but the organizational metrics don't reflect increased productivity. While the throughput gains are most visible at the code generation layer, they're actually being absorbed by friction in review, testing, and deployment.

How Engineering Organizations Become Proficient in AI-Driven Development

Strain is predictable and temporary — if you make deliberate decisions to move past it. The organizations we've watched reach Proficiency and beyond share a common thread: they stopped optimizing for adoption and started building the systems that make AI's impact visible, verifiable, and manageable.

It comes down to a few key changes:

Measuring AI impact vs. adoption

Knowing that 70% of your engineers use AI tools tells you nothing about whether those tools are producing value. The questions that matter in Strain are different: Is AI-generated code correlated with faster delivery or more rework? Which teams are applying AI effectively versus superficially? What does quality look like for AI-assisted PRs versus human-only? You need instrumentation that connects AI activity to downstream outcomes.
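As a starting point, that kind of instrumentation can be as simple as comparing outcomes for two cohorts of PRs. The sketch below is illustrative only: the `PullRequest` fields, the `ai_assisted` flag, and the definition of rework (a PR that needed a follow-up fix) are assumptions for the example, not Span's data model.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class PullRequest:
    # All fields are hypothetical; map them to whatever your tooling records.
    ai_assisted: bool
    hours_to_merge: float
    needed_followup_fix: bool


def summarize(prs):
    """Return PR count, median hours to merge, and rework rate for a group."""
    if not prs:
        return {"count": 0, "median_hours_to_merge": None, "rework_rate": None}
    reworked = sum(1 for pr in prs if pr.needed_followup_fix)
    return {
        "count": len(prs),
        "median_hours_to_merge": median(pr.hours_to_merge for pr in prs),
        "rework_rate": reworked / len(prs),
    }


def compare_cohorts(prs):
    """Split PRs by the ai_assisted flag and summarize each cohort."""
    ai = [pr for pr in prs if pr.ai_assisted]
    human = [pr for pr in prs if not pr.ai_assisted]
    return {"ai_assisted": summarize(ai), "human_only": summarize(human)}
```

A comparison like this shows correlation only. Cohorts often differ in task type and size, so the numbers are a prompt for investigation rather than proof of cause.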

Build solid verification systems

You can't solve a significant increase in code volume with a 10% improvement in reviewer speed. Automated quality guardrails — linting, type checking, test coverage, AI-assisted review — need to handle mechanical verification before a human ever looks at a PR. Reserve human review for architecture, business logic, and judgment calls.
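One way to picture that division of labor is a gate that runs before a PR is routed to a human reviewer. This is a minimal sketch under stated assumptions: the `CheckResults` fields and the 80% coverage threshold are hypothetical, and a real pipeline would read these values from its CI system.

```python
from dataclasses import dataclass


@dataclass
class CheckResults:
    # Hypothetical results reported by CI for a single PR.
    lint_passed: bool
    types_passed: bool
    tests_passed: bool
    coverage_percent: float


def failed_mechanical_checks(results, min_coverage=80.0):
    """List the mechanical checks that failed for a PR.

    An empty list means the PR is ready for human review. The default
    coverage threshold is an arbitrary value chosen for illustration.
    """
    failures = []
    if not results.lint_passed:
        failures.append("lint")
    if not results.types_passed:
        failures.append("types")
    if not results.tests_passed:
        failures.append("tests")
    if results.coverage_percent < min_coverage:
        failures.append("coverage")
    return failures


def ready_for_human_review(results, min_coverage=80.0):
    """True only when every mechanical check has passed."""
    return not failed_mechanical_checks(results, min_coverage)
```

Returning the list of failures, rather than a bare pass or fail, lets the author fix mechanical problems without a reviewer ever being pulled in.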

Govern AI spend like headcount

There is also increasing support for building FinOps-style governance for AI token spend. That means visibility into cost by team, tool, and model — and the discipline to match the right model to the right task rather than defaulting to the most expensive option for everything.
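Cost visibility by team, tool, and model amounts to grouping the same usage records along different dimensions. The sketch below assumes a hypothetical record shape (dictionaries with `team`, `tool`, `model`, and `cost_usd` keys); the team, tool, and model names are placeholders, and real billing exports will look different.

```python
from collections import defaultdict


def spend_by(records, dimension):
    """Total cost_usd per value of one dimension ('team', 'tool', or 'model')."""
    totals = defaultdict(float)
    for record in records:
        totals[record[dimension]] += record["cost_usd"]
    return dict(totals)


# Hypothetical usage records; every name and amount here is a placeholder.
usage = [
    {"team": "payments", "tool": "assistant-a", "model": "large", "cost_usd": 12.5},
    {"team": "payments", "tool": "assistant-a", "model": "small", "cost_usd": 1.5},
    {"team": "search", "tool": "assistant-b", "model": "large", "cost_usd": 20.0},
]

by_team = spend_by(usage, "team")
by_model = spend_by(usage, "model")
```

Seeing the same spend split by model is what makes it possible to ask whether routine tasks are being sent to the most expensive option by default.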

How Orgs Are Moving Past Strain with Span

Two organizations that have partnered with Span demonstrate what the Strain-to-Proficiency transition looks like in practice.

Coursedog

Coursedog, which serves over 400 campuses and 3.3 million students, needed clear, actionable data on how AI affected the execution, quality, and overall health of their development lifecycle. This would allow them not only to scale their AI-driven development workflows, but also to move beyond the fragmented data collection processes you typically see with orgs in the Strain phase.

By the end of 2025:

- Weekly AI adoption across Coursedog surged from 30 to 95%
- PR throughput rose roughly 40% year-over-year without sacrificing quality
- Coursedog had implemented appropriate guardrails after Span surfaced that rework increased approximately 25% in a single quarter

If you read our Coursedog case study, you'll notice that the company ended 2025 with growth metrics that put their engineering organization in the “AI Proficiency” category.

SecurityScorecard

SecurityScorecard faced a similar visibility challenge with engineering time allocation. Before Span, estimates of how time split across features, bugs, and maintenance didn't match reality. Among the findings, Span revealed that 50% of engineering time was going to bug fixes.

That data enabled a strategic reallocation that led to:

- Developer time spent on new features doubling from 25 to 50%
- Quarterly roadmap attainment nearly doubling from 41 to 80%

These numbers are typically what most engineering organizations seek when they adopt and invest in AI. You can read about how SecurityScorecard hit those numbers with Span in our SecurityScorecard case study.

Moving Towards AI Mastery

Strain isn't a sign of failure. It's the natural consequence of adopting a technology that changes what gets produced before the organization has adapted how it verifies, measures, and manages that output. Every engineering organization that adopts AI at scale will pass through this phase.

How long you stay there, however, is another question entirely.

The organizations making their way through the AI Maturity Framework aren't the ones with the best AI tools or the highest adoption rates. They're the ones that can see what those tools are actually doing and make informed, data-driven decisions based on what they find.

That visibility is what Span provides.

Everything you need to unlock engineering excellence
